Texas students' state tests will now be graded in part by an automated scoring engine. The Texas Education Agency (TEA) is rolling out a new system for grading open-ended test questions. The change follows a 2023 redesign of the State of Texas Assessments of Academic Readiness (STAAR) exams, which added more open-ended questions and reduced the number of multiple-choice questions.

The new scoring system uses natural language processing, a type of artificial intelligence, although the TEA maintains that its system "is not artificial intelligence."

The scoring system is trained on 3,000 human-graded responses and learns patterns in them so it can predict the score a human grader would assign. A quarter of the responses will still be graded by humans; the remaining 75% will be scored by the engine without human review.

Because the redesigned test contains more open-ended questions, the TEA estimates that keeping all scoring in human hands would have cost an additional $15 million per year. With the new scoring system, the TEA plans to hire fewer than 2,000 scorers this year, compared with about 6,000 last year.

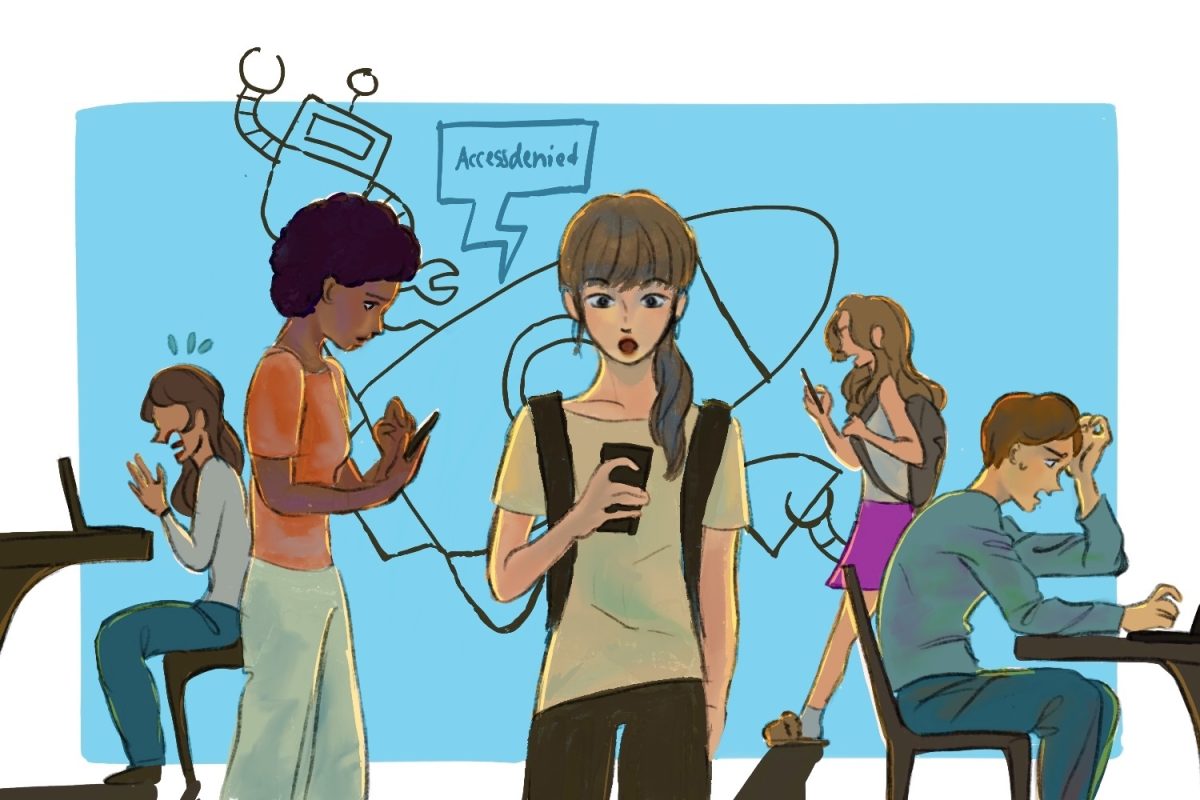

Despite the savings, many parents and educators are concerned about the change. The stakes heighten that concern: STAAR results can contribute to school closures, and scoring well on the tests is a common way for students to meet a high school graduation requirement.

"There ought to be some consensus about whether this is a good thing, or not a good thing, a fair thing or not a fair thing," said Kevin Brown, executive director of the Texas Association of School Administrators, last week. Brown also worried that the new system might not reward originality.

During a pilot of the automated scoring engine in December of last year, Lori Rapp, superintendent of Lewisville Independent School District, observed a "drastic increase" in the number of zeroes on open-ended questions.

The TEA has said the increase stems from differences in the test's requirements, not from the change in grading method.

Overall, confidence in the system is low, particularly because it relies on a technology that many people still do not trust.

“It’s not something parents or teachers are going to trust,” said Carrie Griffith, policy specialist for the Texas State Teachers Association, last week.

As a growing number of states adopt automated grading, Texas's shift has raised questions about whether the public is ready for it.